IWD 2023: can robots treat people fairly?

This year’s International Women’s Day (IWD) focuses on taking action to embrace equity, to drive gender parity and to fight discrimination. Progress has been hard-won, but can the latest technology innovation, artificial intelligence (AI), help further the cause, or will it undermine it?

The news is full of stories about natural language processing (NLP) AI models, such as ChatGPT, doing everything from improving search experiences to writing news articles. But AIs are also promising efficiency and fairness across even highly sensitive areas such as criminal justice, human resources and personal finance. It’s clear that their impact will be profound.

We’re already exploring how to use AI as a tool in customer experience and how it could drive more value for our clients. I’m excited, and I’m certain it has a role to play in our agency and for your brands – as new technology always does. But what can we do to make sure this emerging technology serves us positively and aligns with our own standards and ethics?

Here’s what we need to keep in mind before deploying AI:

1. AI LEARNS FROM PREVIOUS BEHAVIOURS AND DATA

Machine learning does away with traditional programming. Instead of having to code everything, the software learns as it goes. It can set its own rules and discover new ways to do things. This is an iterative process – algorithms are continually refining and improving.

And there’s a flaw here: the data that AI needs to learn from. ChatGPT, for example, was trained on a large word-based dataset, including websites, articles and books. No matter how well-curated this data is, the AI will be exposed to the unconscious biases of numerous authors.

We can already see the problem through Google’s search algorithm: it learns from us as we search. Over time we’ve collectively trained it in some pretty bad habits. When asked about sporting achievements, Google will highlight men’s achievements over women’s; for example, when asked who has scored the most FIFA World Cup goals, Google will tell you it’s Cristiano Ronaldo. The true answer is Christine Sinclair.

The Correct The Internet campaign is taking positive action to reduce bias. It encourages everyone to help retrain Google to answer correctly through feedback, and Google is taking steps to prioritise reduction of bias in results too.

But on IWD, what can we learn from this? Reducing bias isn’t just about looking at the results, but also the processes behind them. With AI, we have to start with the datasets:

- Curate the data you feed AI with bias in mind – later I’ll talk about the problem Amazon created when teaching an AI to judge job applications

- Make sure you’ve done extensive quality assurance (including looking at equality) before you launch – recently we saw Google’s Bard AI make a public mistake that wiped $100bn off the parent company’s shares

- Every AI is a work in progress, so make sure you can capture feedback from your audience within the AI tool itself.

2. AI LEARNS FROM CONTINUED USAGE

An advantage of machine learning is it improves with increased usage and feedback; it learns from its mistakes (and much quicker than I do, to be honest). If you don’t allow inference and feedback, it will never know it’s wrong. Take a customer service chatbot that struggles to comprehend your questions – was it given enough time to learn from continual use before fully launching?

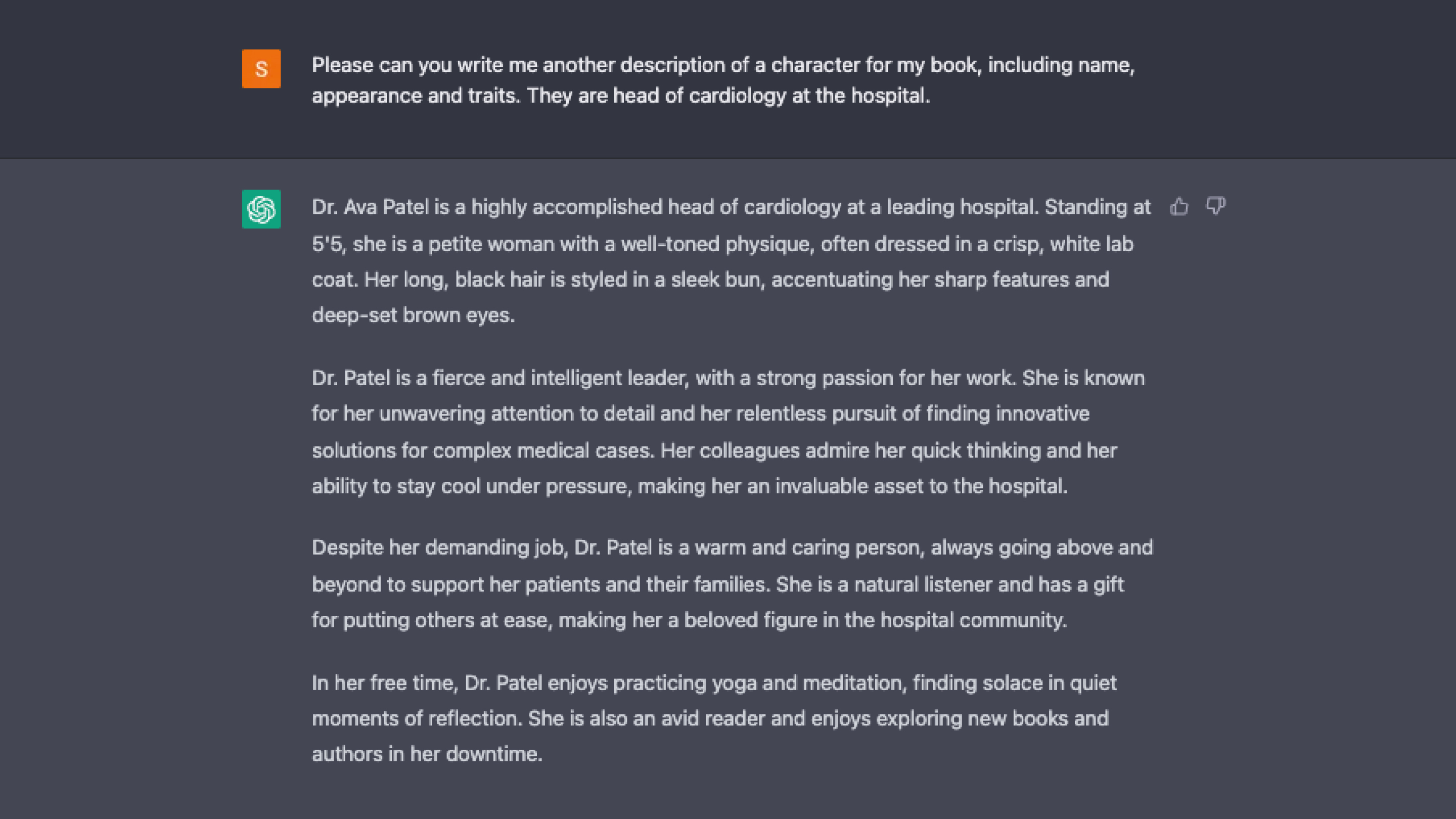

So, how well does ChatGPT avoid bias? As it’s IWD, I decided to test it by asking it to create a character description for my novel.

These sexist stereotypes are unmissable – I didn’t ask it to make the nurse female or the head of cardiology male. It had those clichés already from its dataset.

So, I repeated the question – signalling to the AI that I wasn’t happy with the first answer – and kept asking until it learned to offer me a substantially different result:

There are still biases here, even after multiple attempts, but it’s heartening to see how AI can learn from simply being used. Testers and users have the power to improve any AI – and with this power, as the cliché goes, comes great responsibility.

So, we need to be aware of how AI learns iteratively and allow for a long ‘learning’ process with a staggered launch. Brands naturally want to move fast, but taking it a little more slowly when developing AI solutions will help ensure longer-term value.

3. AI DOES WHAT WE ASK IT TO

We mustn’t lose sight of the fact that we’ve created AI to follow our instructions. Like a fairytale genie who takes every wish literally, we need to be careful what we tell it do and how to do it, otherwise we may get unintended consequences.

Remember I mentioned Amazon? Back in 2018, the internet giant had to shut down its AI recruitment tool for deliberately downrating CVs from women. It had programmed itself to do this. The flaws, according to one project post-mortem, were not in the technology but in what it had been asked to do:

- The objective to pick ‘the top five candidates’ based on their CVs was misleading. Human recruiters don’t use CVs alone – they pick a range of likely candidates and use interviews to narrow down the selection.

- The training data came from existing Amazon employees, so the AI naturally looked for people who looked like those already working there – ignoring anyone different or unusual who might be a better candidate.

- Project target and scorecard systems created the wrong priorities – their AI simply reduced the risk of getting it ‘wrong’ by selecting the ‘safest’ or most predictable bets. In other words, they didn’t ask the right question or give the AI the right context – and that’s on the humans involved.

ONLY IF WE TREAT AI FAIRLY CAN IT TREAT US FAIRLY

AI is going to have a huge impact in our lives, but it’s up to us to make it a positive impact. On IWD, it’s clear we need to turn AI into a new ally.

Firstly, we need more diverse teams to make sure AI can learn better. Women are underrepresented in tech industries, and communities such as Women of Web 3.0 are pushing to overcome this.

Secondly, we need to question what we’re asking AI to do and make sure our instructions don’t have unintended consequences.

Finally, we need to keep interrogating everything we feed into AI, and everything we get out of it, to make sure bias is weeded out.

Overcoming these biases isn’t easy – for anyone. And recognising it is vital. In our krow.x Labs sessions, designed to prepare us for the future, only a third of our participants (33.3%) are women. We’re already asking how we can increase this number and create more equality – and, more importantly, equity – for our participants. Because if we don’t own up to our own biases and those around us, the AIs we create don’t stand a chance of helping us build a fairer world.